13 Best ETL Tools

In the era of big data, businesses are inundated with information from a multitude of sources. This data, if harnessed correctly, can provide invaluable insights that drive strategic decision-making. However, the challenge lies in efficiently extracting, transforming, and loading (ETL) this data into a format that’s ready for analysis. ETL tools are the answer to this challenge. They are software specifically designed to support ETL processes, such as extracting data from disparate sources, scrubbing and cleaning data to achieve higher quality, and consolidating all of it into data warehouses. ETL tools simplify data management strategies and improve data quality through a standardized approach, making them an essential component of any data-driven organization.

What is an ETL Tool?

ETL, which stands for Extract, Transform, and Load, is a data integration process that combines data from multiple sources into a single, consistent data set and loads it into a data warehouse or other target system. The process begins with the extraction of data from various sources, which could include databases, applications, or files. This raw data is then temporarily stored in a staging area.

In the transformation phase, the raw data is processed and prepared for its intended use. This could involve cleaning the data, removing duplicates, and converting it into a format that is compatible with the target system. The transformed data is then loaded into the target system, such as a data warehouse. This process is typically automated and well-defined, allowing for efficient and accurate data integration.
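The extract, stage, transform, and load steps described above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not how any particular ETL tool is implemented. The sample CSV data, the `customers` table, and the function names are all assumptions made up for the example.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; a real source could be a database, an API, or a file.
RAW_CSV = """id,email,signup_date
1, Alice@Example.com ,2024-01-05
2,bob@example.com,2024-01-07
2,bob@example.com,2024-01-07
3,,2024-01-09
"""


def extract(text):
    """Extract: read raw rows from the source into an in-memory staging list."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(staged_rows):
    """Transform: clean values, drop incomplete rows, and remove duplicates."""
    seen_ids = set()
    cleaned = []
    for row in staged_rows:
        email = row["email"].strip().lower()
        if not email:
            continue  # drop rows with a missing email
        row_id = int(row["id"])
        if row_id in seen_ids:
            continue  # drop rows whose id was already seen
        seen_ids.add(row_id)
        cleaned.append((row_id, email, row["signup_date"]))
    return cleaned


def load(rows, connection):
    """Load: write the transformed rows into the target table."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(id INTEGER PRIMARY KEY, email TEXT, signup_date TEXT)"
    )
    connection.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    connection.commit()


def run_pipeline(connection):
    """Run all three stages and return what ended up in the target."""
    staged = extract(RAW_CSV)
    load(transform(staged), connection)
    return connection.execute(
        "SELECT id, email FROM customers ORDER BY id"
    ).fetchall()
```

Calling `run_pipeline(sqlite3.connect(":memory:"))` loads two rows: the duplicate of id 2 and the row with an empty email are removed during transformation. An in-memory SQLite database stands in for the data warehouse here.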

ETL is a crucial component of data warehousing and business intelligence, enabling organizations to consolidate their data in a single location for analysis and reporting. It provides a systematic and accurate way to analyze data, ensuring that all enterprise data is consistent and up-to-date. The ETL process has evolved over time, with modern ETL tools offering more advanced features and capabilities, such as real-time data integration and cloud-based data processing.

The Need for ETL Tools

In today’s data-driven world, the need for ETL tools is more pressing than ever. Businesses generate vast amounts of data daily, and manual ETL processes are no longer feasible. ETL tools automate the extraction, transformation, and loading processes, ensuring the data is accurate and ready for analysis. They break down data silos, making it easy for data scientists to access and analyze data, turning it into actionable business intelligence. ETL tools also improve data quality by removing inconsistencies and anomalies, and they simplify the data integration process, seamlessly combining data from various sources. This results in time efficiency, as the need to query multiple data sources is eliminated, speeding up decision-making processes.

How do ETL Tools work?

ETL tools work in three main stages: extraction, transformation, and loading. In the extraction stage, data is pulled from various sources, which could range from databases and applications to spreadsheets and cloud-based storage. This data is then transformed, which involves cleaning, validating, and reformatting the data to ensure it meets the necessary quality standards. The final stage is loading, where the transformed data is loaded into a data warehouse or another target system for storage and analysis. ETL tools automate this entire process, reducing errors and speeding up data integration. They also provide graphical interfaces for faster, easier results than traditional methods of moving data through hand-coded data pipelines.
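The validation part of the transformation stage can be pictured as a set of row-level checks that separate clean rows from rejected ones. The sketch below is an assumption-laden example rather than the behavior of a specific tool: the field names (`email`, `order_date`, `amount`) and the three rules are hypothetical.

```python
from datetime import datetime


def validate(row):
    """Return a list of problems found in one row (an empty list means valid)."""
    problems = []
    if "@" not in row.get("email", ""):
        problems.append("email is missing or malformed")
    try:
        datetime.strptime(row.get("order_date", ""), "%Y-%m-%d")
    except ValueError:
        problems.append("order_date is not in YYYY-MM-DD format")
    try:
        if float(row.get("amount", "")) < 0:
            problems.append("amount is negative")
    except ValueError:
        problems.append("amount is not a number")
    return problems


def split_valid_and_rejected(rows):
    """Route each row to the valid list or to the rejected list with its reasons."""
    valid, rejected = [], []
    for row in rows:
        problems = validate(row)
        if problems:
            rejected.append((row, problems))
        else:
            valid.append(row)
    return valid, rejected
```

Keeping rejected rows together with the reasons they failed, instead of silently dropping them, makes it possible to review data quality problems after a run.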

13 Best ETL Tools

- Integrate.io

- Talend

- IBM DataStage

- Oracle Data Integrator

- Fivetran

- Coupler.io

- AWS Glue

- Stitch

- Skyvia

- Azure Data Factory

- SAS Data Management

- Google Cloud Dataflow

- Portable

How to choose the Best ETL Tools?

Choosing the right ETL tool depends on several factors. First, consider the complexity of your data integration requirements. A great ETL tool should be able to move and transform large amounts of data quickly and efficiently, with minimal effort. It should also support multiple data sources so that you can easily combine datasets from disparate sources. An intuitive user interface is key for quickly manipulating data, configuring settings, and scheduling tasks. Additionally, consider the scalability of the tool and your budget. Different organizations have different needs, so the best ETL tool will vary depending on your specific situation and use cases.

ETL Tools (Free and Paid)

1. Integrate.io

Integrate.io is a leading data integration solution that provides a unified, low-code data warehouse integration platform. It offers a comprehensive suite of tools and connectors to support your entire data journey. With its user-friendly interface and robust functionality, Integrate.io empowers businesses to consolidate, process, and prepare data for analytics, thereby enabling informed decision-making.

What does Integrate.io do?

Integrate.io serves as a cloud-based ETL tool that allows for the creation of visualized data pipelines for automated data flows across a wide range of sources and destinations. It provides a code-free, jargon-free environment, making it accessible to both technical and non-technical users. Integrate.io facilitates the implementation of event-driven architecture, real-time data streaming, and API creation with minimal coding, addressing challenges such as inflexible data processing pipelines and scalability limitations.

Integrate.io Key Features

Easy Data Transformations: Integrate.io simplifies your ETL and ELT processes by offering a low-code, simple drag-and-drop user interface and over a dozen transformations – like sort, join, filter, select, limit, clone, etc.

Simple Workflow Creation for Defining Dependencies Between Tasks: This feature allows users to easily define the sequence and dependencies of data processing tasks, ensuring efficient and error-free data flow.

REST API: Integrate.io provides a comprehensive REST API solution, enabling users to create APIs with minimal coding and flexible deployment.

Salesforce to Salesforce Integrations: This feature allows users to extract Salesforce data, transform it, and inject it back into Salesforce, offering a unique advantage to enterprises that rely heavily on Salesforce data for CRM and other business operations.

Data Security and Compliance: Integrate.io ensures the security of your data with native encryption features and compliance with data protection regulations.

Diverse Data Source and Destination Options: Integrate.io supports a wide range of data sources and destinations, providing flexibility and versatility in data integration.
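The idea behind defining dependencies between tasks, mentioned in the workflow feature above, is that a task runs only after everything it depends on has finished. The sketch below shows that general concept with Python's standard `graphlib` module. It is not Integrate.io's implementation, and the task names are hypothetical.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical workflow: each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "extract_orders": set(),
    "extract_customers": set(),
    "join_orders_customers": {"extract_orders", "extract_customers"},
    "load_warehouse": {"join_orders_customers"},
}


def execution_order(dependencies):
    """Return the tasks in an order that respects every dependency."""
    return list(TopologicalSorter(dependencies).static_order())
```

For this workflow, both extract tasks come first (in either order), followed by the join and then the load. If the dependencies ever formed a loop, `TopologicalSorter` would raise `graphlib.CycleError` rather than produce an order.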

Integrate.io Pricing Plans

Integrate.io offers three main pricing plans: the Enterprise Plan, the Professional Plan, and the Starter Plan.

Enterprise Plan: This plan is designed for large businesses with extensive data integration needs. It offers advanced features and premium support. The pricing for this plan is custom and can be obtained by contacting Integrate.io directly.

Professional Plan: This plan, priced at $25,000 per year, is suitable for medium-sized businesses. It offers a balance of advanced features and affordability.

Starter Plan: This plan, priced at $15,000 per year, is ideal for small businesses or startups with basic data integration needs. It offers essential features at an affordable price.

Integrate.io accepts debit and credit cards and bank wire transfers for payments.

2. Talend

Talend is a comprehensive data management solution that thousands of organizations rely on to transform data into actionable business insights. It is a flexible and trusted platform that supports end-to-end data management needs across an entire organization, from integration to delivery. Talend can be deployed on-premises, in the cloud, or in a hybrid environment, making it a versatile tool for any data architecture. It is designed to deliver clear and predictable value while supporting security and compliance needs.

What does Talend do?

Talend provides unified development and management tools to integrate and process all of your data. It is a software integration platform that offers solutions for data integration, data quality, data management, data preparation, and big data. Talend helps organizations make real-time decisions and become more data-driven by making data more accessible, enhancing its quality, and moving it quickly to target systems. It also offers a broad set of plugins for integrating with the big data ecosystem.

Talend Key Features

Data Integration: Talend offers robust data integration capabilities. It provides a range of SQL templates to simplify the most common data query and update, schema creation and modification, and data management tasks.

Data Quality: Talend ensures the quality of data by providing features for data profiling, cleansing, and monitoring. It helps businesses improve the quality of their data, making it more accessible and quickly moved to target systems.

Data Governance: Talend supports data governance by providing features for data cataloging, data lineage, and data privacy. It helps organizations maintain compliance with data regulations and ensure the security of their data.

Low-Code Platform: Talend is a low-code platform that simplifies the process of developing data integration workflows. It provides a visual designer that makes it easy to create and manage data pipelines.

Scalability: Talend is designed to scale seamlessly as data needs grow. It can handle large volumes of data and complex data processing tasks, making it a future-proof investment for businesses.

Cloud and Big Data Integration: Talend supports integration with various cloud platforms and big data technologies. It provides connectors to packaged applications, databases, mainframes, files, web services, and more.

Talend Pricing Plans

Talend offers several pricing plans to cater to different business needs. The available plans include:

Data Management Platform: This plan offers comprehensive data integration and management features. It is designed for businesses that need to integrate, cleanse, and manage data from various sources.

Big Data Platform: This plan is designed for businesses that need to handle large volumes of data. It offers features for big data integration, data quality, and data governance.

Data Fabric: This is Talend’s most comprehensive plan. It combines the features of the Data Management Platform and Big Data Platform and adds additional capabilities for application and API integration.

For pricing information, users need to contact the sales team.

3. IBM DataStage

IBM DataStage stands as a robust and versatile ETL tool, designed to facilitate and streamline the process of data integration across various systems. Its capabilities are rooted in a powerful parallel processing architecture, which ensures scalability and high performance for data-intensive operations. As part of IBM Cloud Pak for Data as a Service, DataStage offers a comprehensive solution that supports a wide range of data integration tasks, from simple to complex. It is engineered to work seamlessly on-premises or in the cloud, providing flexibility to businesses in managing their data workflows. The platform’s enterprise connectivity and extensibility make it a suitable choice for organizations looking to harness their data for insightful analytics and AI applications, ensuring that they can deliver quality data reliably to stakeholders.

What does IBM DataStage do?

IBM DataStage excels in extracting data from multiple sources, transforming it to meet business requirements, and loading it into target systems, whether they are on-premises databases, cloud repositories, or data warehouses. It is designed to handle a vast array of data formats and structures, enabling businesses to integrate disparate data sources with ease. The tool’s powerful transformation capabilities allow for complex data processing, including data cleansing and monitoring, to ensure that the data delivered is of the highest quality. With its parallel processing engine, DataStage can efficiently process large volumes of data, making it an ideal solution for enterprises that deal with big data challenges. Additionally, its open and extensible nature allows for customization and integration with other AI and analytics platforms, providing a seamless data integration experience that supports a wide range of data-driven initiatives.
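Parallel processing in a data integration engine generally means splitting the data into partitions and transforming the partitions at the same time. The sketch below illustrates that general idea only. It is not DataStage's engine or API, and the transformation and field names (`name`, `amount_cents`) are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(rows, partition_count):
    """Split rows into roughly equal partitions (round-robin)."""
    return [rows[i::partition_count] for i in range(partition_count)]


def transform_partition(rows):
    """Hypothetical transformation: tidy names and convert cents to dollars."""
    return [
        {"name": r["name"].strip().title(), "amount": r["amount_cents"] / 100}
        for r in rows
    ]


def parallel_transform(rows, partition_count=4):
    """Transform all partitions concurrently and merge the results.

    Row order is not preserved, because rows are dealt out round-robin.
    """
    parts = partition(rows, partition_count)
    with ThreadPoolExecutor(max_workers=partition_count) as pool:
        results = list(pool.map(transform_partition, parts))
    return [row for part in results for row in part]
```

Threads keep the example simple and portable. For CPU-heavy transformations in Python, separate processes or a distributed engine would be the more realistic choice.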

IBM DataStage Key Features

Parallel Processing: IBM DataStage leverages a high-performance parallel processing engine that allows for the efficient handling of large data volumes, significantly reducing the time required for data integration tasks.

Enterprise Connectivity: The tool offers extensive connectivity options, enabling seamless integration with a multitude of enterprise systems, databases, and applications, facilitating a unified data ecosystem.

Cloud Compatibility: DataStage is designed to run on any cloud environment, providing flexibility and scalability for businesses looking to leverage cloud resources for their data integration needs.

Data Cleansing and Monitoring: It includes features to cleanse and monitor data, ensuring that the information processed and delivered is accurate, consistent, and of high quality.

Extensibility: The platform is open and extensible, allowing for customization and integration with other data and AI tools, which enhances its capabilities to meet specific business requirements.

End-to-End Data Integration: DataStage provides a comprehensive solution for the entire data integration lifecycle, from extraction and transformation to loading, making it a one-stop-shop for all data integration activities.

IBM DataStage Pricing Plans

IBM DataStage offers a variety of pricing plans tailored to meet the needs of different organizations, from small businesses to large enterprises. Each plan is designed to provide specific features and capabilities, ensuring that businesses can select the option that best aligns with their data integration requirements and budget. For pricing information, users need to contact the sales team.

IBM DataStage accepts debit and credit cards for payments.

4. Oracle Data Integrator

Oracle Data Integrator (ODI) is an ETL tool and a comprehensive data integration platform that caters to a wide range of data integration needs. It is designed to handle high-volume, high-performance batch loads, event-driven, trickle-feed integration processes, and SOA-enabled data services. The latest version, ODI 12c, offers superior developer productivity and an improved user experience with a redesigned flow-based declarative user interface. It also provides deeper integration with Oracle GoldenGate, offering comprehensive big data support and added parallelism when executing data integration processes.

What does Oracle Data Integrator do?

Oracle Data Integrator is a strategic data integration offering from Oracle that provides a flexible, high-performance architecture for executing data integration processes. In practice, it moves and transforms data across batch, event-driven, trickle-feed, and service-oriented (SOA) workloads, and it can be paired with Oracle GoldenGate for big data support and added parallelism during execution.

Oracle Data Integrator Key Features

High-Performance Architecture: Oracle Data Integrator offers a flexible and high-performance architecture that allows for efficient data integration processes. It supports high-volume, high-performance batch loads, event-driven, trickle-feed integration processes, and SOA-enabled data services.

Improved User Experience: The latest version, ODI 12c, provides an improved user experience with a redesigned flow-based declarative user interface. This interface enhances developer productivity and makes it easier to manage and execute data integration processes.

Deep Integration with Oracle GoldenGate: Oracle Data Integrator provides deeper integration with Oracle GoldenGate. This integration allows for comprehensive big data support and added parallelism when executing data integration processes.

Big Data Support: Oracle Data Integrator offers comprehensive big data support. It integrates seamlessly with big data platforms like Hadoop and Spark, enabling efficient processing and analysis of large datasets.

Collaborative Development and Version Control: Oracle Data Integrator offers features for collaborative development and version control. These features facilitate team-based ETL projects and ensure that all changes are tracked and managed effectively.

Robust Security Features: Oracle Data Integrator offers robust security features and integrates with existing security frameworks. This ensures data confidentiality and compliance with various data protection regulations.

Oracle Data Integrator Pricing Plans

Oracle Data Integrator offers a variety of pricing plans to cater to different user needs. The pricing is based on a per-core license model, with annual subscriptions ranging from several thousand to tens of thousands of dollars per year. The exact cost depends on the number of cores required, deployment options (cloud vs. on-premises), and additional features needed. For example, a basic cloud deployment with 2 cores could cost around $5,000 per year, while a larger on-premises deployment with 16 cores and advanced features could cost upwards of $50,000 per year.

Oracle Data Integrator accepts debit and credit cards, PayPal, and bank wire transfer for payments.

5. Fivetran

Fivetran is a leading automated data movement platform designed to streamline the process of data integration and centralization. It is a robust ETL tool that empowers businesses to achieve self-service analytics, build custom data solutions, and spend less time integrating systems. Fivetran is a perfect platform for engineers, analysts, and developers who are looking to centralize data for reports, analysis, and building with data.

What does Fivetran do?

Fivetran is a cloud-based data pipeline that automates the process of extracting data from various sources, transforming it into a usable format, and loading it into a data warehouse for analysis. It removes bottlenecks in data processes without compromising on compliance, making it an ideal solution for businesses that need to extend their data platform to support custom needs. Whether you’re an engineer looking to spend less time integrating systems, an analyst working with SQL or BI tools, or a developer building with data, Fivetran’s API and webhooks make it a versatile tool for all your data needs.

Fivetran Key Features

Automated Data Integration: Fivetran simplifies the process of data integration by automating the extraction, transformation, and loading of data from various sources into a data warehouse.

Self-Service Analytics: Fivetran enables businesses to achieve self-service analytics by removing bottlenecks in data processes, allowing for more efficient data analysis and decision-making.

Custom Data Solutions: With Fivetran, businesses can extend their data platform to support custom needs, providing flexibility and adaptability in managing data.

API and Webhooks: Fivetran offers API and webhooks, making it a perfect platform for developers building with data.

Compliance without Compromise: Fivetran ensures data compliance without compromising on the efficiency of data processes, providing businesses with peace of mind.

Support for Various User Types: Whether you’re an engineer, analyst, or developer, Fivetran caters to your data needs, making it a versatile tool for various user types.

Fivetran Pricing Plans

Fivetran offers four different pricing plans: Free Plan, Starter Plan, Standard Plan, and Enterprise Plan. For pricing of each plan, users need to contact the sales team.

Free Plan: The Free Plan is a basic offering that allows users to experience the core features of Fivetran.

Starter Plan: The Starter Plan includes everything in the Free Plan, with additional features and capabilities for more comprehensive data integration needs.

Standard Plan: The Standard Plan includes everything in the Starter Plan, plus unlimited users, 15-minute syncs, database connectors, and access to Fivetran’s REST API.

Enterprise Plan: The Enterprise Plan includes everything in the Standard Plan, plus enterprise database connectors, 1-minute syncs, granular roles & support for teams, advanced data governance, advanced security and data residency options, and priority support.

Fivetran accepts debit and credit cards for payments.

6. Coupler.io

Coupler.io is an all-in-one data analytics and automation platform designed to streamline the process of data collection, transformation, and automation. It empowers businesses to make data-driven decisions by providing a single point of truth between various data sources. With its user-friendly interface and robust functionality, Coupler.io simplifies the complex task of data analytics, allowing businesses to focus on deriving valuable insights from their data.

What does Coupler.io do?

Coupler.io serves as an integration tool that synchronizes data between various services on a schedule. It allows businesses to easily export and combine data from the apps they use, connecting their business applications to spreadsheets, worksheets, databases, or data visualization tools in minutes. Coupler.io offers over 200 integrations, enabling businesses to collect and analyze data in one place. It also provides a Transform module that allows users to preview, transform, and structure their data before moving it to the destination. In addition, Coupler.io automates data management with webhooks, integrating importers into internal workflows to notify systems about executions, refresh data in apps, or launch data imports automatically.

Coupler.io Key Features

Data Integration: Coupler.io provides a robust data integration feature that allows businesses to connect their applications to various data sources, enabling them to collect and analyze data in one place.

Data Transformation: With the Transform module, users can preview, transform, and structure their data directly within Coupler.io before moving it to the destination. This feature allows businesses to focus on the data that matters most to them.

Automation: Coupler.io automates data management with webhooks, integrating importers into internal workflows to notify systems about executions, refresh data in apps, or launch data imports automatically.

Scheduling: Coupler.io provides scheduling options to automate the data refreshing process. Users can set specific intervals for the tool to automatically update the imported data, ensuring that reports or analyses are always up to date.

Support for Various Data Types: Coupler.io supports various data types, including numbers, dates, texts, and even images, providing flexibility in data handling.

Data Analytics Consulting Services: In addition to the data integration tool, Coupler.io offers data analytics consulting services, providing businesses with expert advice on how to best utilize their data.

Coupler.io Pricing Plans

Coupler.io offers four pricing plans to cater to different business needs.

Starter Plan: Priced at $64 per month, this plan is designed for 2 users. It includes all sources, 500 runs per month, and 10,000 rows per run. The data is automatically refreshed on a daily basis.

Squad Plan: This plan costs $132 per month and is suitable for 5 users. It includes all sources, 4,000 runs per month, and 50,000 rows per run. The data is automatically refreshed up to every 30 minutes.

Business Plan: At $332 per month, this plan is designed for unlimited users. It includes all sources, over 10,000 runs per month, and over 100,000 rows per run. The data is automatically refreshed up to every 15 minutes.

Enterprise Plan: For pricing and features of the Enterprise plan, businesses are advised to contact Coupler.io directly.

Coupler.io accepts debit and credit cards for payments.

7. AWS Glue

AWS Glue is a serverless data integration service that simplifies the process of discovering, preparing, and integrating data from multiple sources for analytics, machine learning, and application development. It supports a wide range of workloads and is designed to scale on demand, providing tailored tools for various data integration needs. AWS Glue is part of the Amazon Web Services (AWS) suite, offering a comprehensive solution for managing and transforming data at any scale.

What does AWS Glue do?

AWS Glue is designed to streamline the process of data integration. It discovers, prepares, moves, and integrates data from various sources, making it ready for analytics, machine learning, and application development. AWS Glue can initiate ETL jobs as new data arrives. For instance, it can be configured to run ETL jobs as soon as new data becomes available in Amazon Simple Storage Service (S3). It also provides a Data Catalog to quickly discover and search multiple AWS data sources.
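One way to picture the "run a job when new data lands in S3" pattern is a small handler that reads an S3 event notification and starts one job run per new object. The sketch below is an illustration under assumptions, not AWS's own wiring: the job name `nightly_orders_etl` and the `--source_path` argument are hypothetical, and the function that actually starts the job is passed in as a parameter so the example runs without AWS credentials.

```python
from urllib.parse import unquote_plus


def objects_from_s3_event(event):
    """Return (bucket, key) pairs from an S3 event notification payload."""
    pairs = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 event notifications.
        key = unquote_plus(record["s3"]["object"]["key"])
        pairs.append((bucket, key))
    return pairs


def handle_event(event, start_job):
    """Start one ETL job run per new object and collect the results.

    start_job is any callable taking (job_name, arguments). With boto3 it
    could wrap boto3.client("glue").start_job_run(JobName=..., Arguments=...).
    The job name and argument key below are hypothetical.
    """
    return [
        start_job("nightly_orders_etl", {"--source_path": f"s3://{bucket}/{key}"})
        for bucket, key in objects_from_s3_event(event)
    ]
```

Injecting `start_job` keeps the event parsing testable on its own, and the same handler shape could be deployed behind whatever trigger mechanism a team already uses.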

AWS Glue Key Features

Data Integration Engine Options: AWS Glue offers different data integration engines to support various user needs and workloads. It can also run ETL jobs in an event-driven fashion, initiating them as soon as new data arrives.

AWS Glue Data Catalog: This feature allows users to quickly discover and search multiple AWS data sources. The Data Catalog is a persistent metadata store for all your data assets, regardless of where they are located.

No-Code ETL Jobs: AWS Glue provides the ability to manage and monitor data quality, and to create ETL jobs without the need for coding. This simplifies the process of data integration and transformation.

Scale on Demand: AWS Glue is designed to scale on demand, allowing it to support all workloads and adjust to the needs of the user.

Support for Git: AWS Glue integrates with Git, a widely used open-source version-control system. This allows users to maintain a history of changes to their AWS Glue jobs.

AWS Glue Flex: This is a flexible execution job class that allows users to reduce the cost of non-urgent workloads.

AWS Glue Pricing Plans

AWS Glue uses pay-as-you-go pricing rather than fixed plans. Charges are based primarily on the resources consumed while jobs are running, metered in data processing unit (DPU) hours, and AWS publishes the current rates on its pricing page.

AWS Glue accepts debit and credit cards, PayPal, and bank wire transfer for payments.

8. Stitch

Stitch is a cloud-first, open-source platform designed to rapidly move data from various sources to a destination of your choice. As a powerful ETL tool, Stitch connects to a wide range of data sources, from databases like MySQL and MongoDB to SaaS applications like Salesforce and Zendesk. It’s designed to bypass the development workload, allowing teams to focus on building their core product and getting it to market faster. Stitch is not a data analysis or visualization tool, but it plays a crucial role in data movement, setting the stage for subsequent analysis using other tools.

What does Stitch do?

Stitch is a flexible, effortless, and powerful ETL service that connects to all your data sources and replicates that data to a destination of your choice. It’s designed to securely and reliably replicate data at any volume, allowing you to grow without worrying about an ETL failure. Stitch’s infrastructure is ideal for efficiently handling critical workloads and, with multiple redundant safeguards, protects against data loss in the event of an outage. It’s a world-class ETL SaaS solution that seamlessly flows data from multiple sources to a destination, providing a fast, cost-efficient, and hassle-free data integration experience.

Stitch Key Features

Automated Cloud Data Pipelines: Stitch offers fully automated cloud data pipelines, enabling teams to get insights faster and focus on building their core product.

Secure Data Movement: Stitch provides secure options for connections to all data sources and destinations, including SSL/TLS, SSH tunneling, and IP whitelisting, ensuring the safety of your data during transit.

Flexible Replication Configuration: With Stitch, you can configure your data replication process according to your needs, providing flexibility and control over your data movement.

Scalable and Reliable ETL: Stitch is designed to securely and reliably replicate data at any volume, allowing you to grow without worrying about an ETL failure.

Open-Source Platform: Stitch is an open-source platform, allowing developers to create and collaborate on integrations using a community-driven approach.

Support for Multiple Data Sources: Stitch supports a wide range of data sources, from databases like MySQL and MongoDB to SaaS applications like Salesforce and Zendesk, ensuring comprehensive data integration.

Stitch Pricing Plans

Stitch offers three pricing plans: Standard, Advanced, and Premium. Each plan is designed to cater to different data needs and comes with its own set of features.

Standard Plan: The Standard Plan is a flexible plan (starting at $100 per month) that grows with your needs. It provides full access to over 100 data sources and is priced based on the volume of data above 5 million rows per month.

Advanced Plan: The Advanced Plan, priced at $1,250 per month, is designed for more demanding, enterprise-scale clients. It includes additional features and services not available in the Standard Plan.

Premium Plan: The Premium Plan, priced at $2,500 per month, is the most comprehensive offering from Stitch. It includes all the features of the Advanced Plan, along with additional premium features.

Stitch accepts debit and credit cards, PayPal, and bank wire transfer for payments.

9. Skyvia

Skyvia presents itself as a versatile cloud-based platform designed to address a variety of data management needs. It offers a comprehensive suite of tools for data integration, backup, and access across different cloud and on-premises data sources. With a focus on simplicity and ease of use, Skyvia aims to streamline complex data processes, making them accessible to both technical and non-technical users. Its no-code approach allows for quick setup and execution of data tasks, while still providing robust capabilities for those who require more advanced features.

What does Skyvia do?

Skyvia is a multifaceted tool that simplifies the process of integrating, backing up, and managing data across diverse environments. It enables users to connect a wide array of cloud applications, databases, and flat files without the need for extensive coding knowledge. Whether it’s migrating data between systems, synchronizing records across platforms, or setting up automated workflows, Skyvia provides a user-friendly interface to accomplish these tasks efficiently. Additionally, it offers capabilities for secure data backup and restoration, ensuring that critical business data is protected and easily recoverable.

Skyvia Key Features

Cloud Data Integration: Skyvia’s data integration service allows users to connect various data sources, such as SaaS applications, databases, and CSV files, and move data between them seamlessly. This includes support for all DML operations, such as creating, updating, deleting, and upserting records, ensuring that data remains consistent and up to date across different systems.

Backup and Recovery: The platform provides robust backup solutions for cloud data, ensuring that users can protect their information against accidental deletion or corruption. Recovery processes are straightforward, allowing for quick restoration of data when needed.

Data Management: With Skyvia, users can access and manage their data through a centralized interface. This includes querying, editing, and visualizing data from different sources without the need for direct interaction with the underlying databases or applications.

No-Code Interface: The platform’s no-code interface empowers users to perform complex data tasks without writing a single line of code. This democratizes data management, making it accessible to a broader range of users within an organization.

Flexible Scheduling: Skyvia offers flexible scheduling options for data integration tasks, allowing users to automate processes according to their specific requirements. This can range from running tasks once a day to nearly real-time synchronization, depending on the chosen plan.

Advanced Mapping and Transformation: Users can take advantage of powerful mapping features to transform data as it moves between sources. This includes splitting data, using expressions and formulas, and setting up lookups, which are essential for ensuring that the data fits the target schema.
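
To make the mapping and upsert ideas above concrete, here is a minimal sketch in plain Python using the standard-library sqlite3 module. It is not Skyvia's API; the table name, field names, sample rows, and the country lookup are all hypothetical and exist only for illustration. It shows a field being split, an expression applied, a lookup resolved, and the result upserted so that a repeated key updates the existing record instead of duplicating it.

```python
import sqlite3

# Hypothetical source rows and lookup table, invented for illustration.
SOURCE_ROWS = [
    {"email": "Ann@Example.com", "full_name": "Ann Lee", "country": "Germany"},
    {"email": "bob@example.com", "full_name": "Bob Ray", "country": "France"},
    # Same key as the first row: should update it rather than add a new record.
    {"email": "ann@example.com", "full_name": "Ann Lee-Smith", "country": "Germany"},
]
COUNTRY_CODES = {"Germany": "DE", "France": "FR"}


def map_row(row):
    """Split a name, apply an expression, and resolve a lookup."""
    first, _, last = row["full_name"].partition(" ")  # splitting one field into two
    return {
        "email": row["email"].lower(),                 # simple expression
        "first_name": first,
        "last_name": last,
        "country_code": COUNTRY_CODES.get(row["country"], "??"),  # lookup
    }


def upsert_contacts(conn, rows):
    """Insert new contacts or overwrite existing ones, keyed on email."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS contacts ("
        "email TEXT PRIMARY KEY, first_name TEXT, last_name TEXT, country_code TEXT)"
    )
    for row in rows:
        # INSERT OR REPLACE replaces the whole row when the primary key already exists.
        conn.execute(
            "INSERT OR REPLACE INTO contacts "
            "VALUES (:email, :first_name, :last_name, :country_code)",
            map_row(row),
        )
    conn.commit()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    upsert_contacts(connection, SOURCE_ROWS)
    for record in connection.execute("SELECT * FROM contacts ORDER BY email"):
        print(record)
```

A no-code tool hides these steps behind a visual mapping editor, but the underlying logic is broadly similar: transform each record to fit the target schema, then write it with a key that decides between insert and update.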

Skyvia Pricing Plans

Skyvia offers a range of pricing plans to accommodate different user needs and budgets.

Free Plan: This plan is designed for users who require basic integration capabilities, offering 10,000 records per month with daily scheduling and two scheduled integrations.

Basic Plan: Aimed at small businesses or individual users, the Basic Plan, priced at $19 per month ($15 per month when billed annually), increases the number of records and scheduling options, providing more flexibility for regular data tasks.

Standard Plan: For organizations with more demanding integration needs, the Standard Plan, priced at $99 per month ($79 per month when billed annually), offers a higher number of records, more frequent scheduling, and additional features like advanced mapping and transformation tools.

Professional Plan: The Professional Plan is tailored for large enterprises that require extensive data integration capabilities, including unlimited scheduled integrations and the shortest execution frequency.

Skyvia accepts various payment methods, including debit and credit cards and bank wire transfers, to accommodate users’ preferences.

10. Azure Data Factory

Azure Data Factory is a cloud-based data integration service that allows users to create, schedule, and orchestrate data workflows. It is designed to facilitate the movement and transformation of data across various data stores, both on-premises and in the cloud. With a focus on ease of use, it provides a visual interface for building complex ETL processes that can scale to meet the demands of big data workloads.

What does Azure Data Factory do?

Azure Data Factory enables businesses to integrate disparate data sources, whether they reside in various cloud services or on-premises infrastructure. It acts as the glue that brings together data from multiple sources, allowing for data transformation and analysis in a centralized, managed way. This service supports a variety of ETL and data integration scenarios, from simple data movement to complex data processing pipelines, and it is capable of handling large volumes of data efficiently.

Azure Data Factory Key Features

Data Integration Capabilities: Azure Data Factory offers robust data integration capabilities, allowing users to seamlessly connect to a wide array of data sources, including databases, file systems, and cloud services.

Visual Data Flows: The tool provides a visual interface for designing data-driven workflows, making it easier for users to set up and manage their data pipelines without the need for extensive coding.

Managed ETL Services: It delivers a fully managed ETL service, which means that users do not have to worry about infrastructure management, and can focus on designing their data transformation logic.

Support for Various Compute Services: Azure Data Factory integrates with various Azure compute services, such as Azure HDInsight and Azure Databricks, enabling powerful data processing and analytics.

Scheduling and Event-Driven Triggers: Users can schedule data pipelines or set them to run in response to certain events, which provides flexibility and ensures that data is processed in a timely manner.

Monitoring and Management Tools: The service includes tools for monitoring and managing data pipelines, giving users visibility into their data workflows and the ability to troubleshoot issues as they arise.

Azure Data Factory Pricing Plans

Azure Data Factory pricing is usage-based, so costs vary with the workload rather than following fixed plans. Users can estimate their costs using the Azure Data Factory pricing calculator.

Payments for Azure Data Factory can be made using debit and credit cards, PayPal, and bank wire transfer.

11. SAS Data Management

SAS Data Management stands as a comprehensive solution designed to empower organizations in their quest to manage and optimize data pipelines efficiently. It is a platform that caters to over 80,000 organizations, facilitating seamless data connectivity, enhanced transformations, and robust governance. The tool is engineered to provide a unified view of data across various storage systems, including databases, data warehouses, and data lakes. It supports connections with leading cloud platforms, on-premises systems, and multicloud data sources, streamlining data workflows and executing ELT with ease. SAS Data Management is recognized for its ability to ensure regulatory compliance, build trust in data, and offer transparency, positioning itself as a leader in data quality solutions.

What does SAS Data Management do?

SAS Data Management is a versatile tool that enables businesses to manage their data lifecycle comprehensively. It provides an intuitive, point-and-click graphical user interface that simplifies complex data management tasks. Users can query and use data across multiple systems without the need for physical reconciliation or data movement, offering a cost-effective solution for business users. The tool supports master data management with features like semantic data descriptions and sophisticated fuzzy matching to ensure data integrity. Additionally, SAS Data Management offers grid-enabled load balancing and multithreaded parallel processing for rapid data transformation and movement, eliminating the need for overlapping, redundant tools and ensuring a unified data management approach.
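
As a rough illustration of what fuzzy matching means in a master data context, the sketch below flags likely duplicate records using Python's standard-library difflib. This is not how SAS implements matching; the function names, the sample company names, and the 0.85 threshold are assumptions chosen for the example.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Return a 0..1 similarity score after basic normalization."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def find_duplicates(names, threshold=0.85):
    """Return pairs of names whose similarity meets the (assumed) threshold."""
    pairs = []
    for i, left in enumerate(names):
        for right in names[i + 1:]:
            if similarity(left, right) >= threshold:
                pairs.append((left, right))
    return pairs


if __name__ == "__main__":
    # Hypothetical customer names, invented for illustration.
    customers = ["Acme Corporation", "ACME Corporation ", "Globex Inc"]
    print(find_duplicates(customers))
```

Production matching engines typically add phonetic rules, token reordering, and domain-specific dictionaries, but the core idea of scoring near-identical records and reviewing those above a threshold is the same.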

SAS Data Management Key Features

Seamless Data Connectivity: SAS Data Management excels in connecting disparate data sources, providing users with the ability to access and integrate data across various platforms without the hassle of manual intervention.

Enhanced Transformations: The tool offers advanced data transformation capabilities, enabling users to manipulate and refine their data effectively, ensuring it is ready for analysis and reporting.

Robust Governance: With SAS Data Management, organizations can enforce data governance policies, ensuring data quality and compliance with regulatory standards.

Unified Data View: It provides a comprehensive view of an organization’s data landscape, making it easier to manage and analyze data from a central point.

Low-Code Visual Designer: The platform includes a low-code, self-service visual designer that simplifies the creation and management of data pipelines, making it accessible to users with varying technical expertise.

Regulatory Compliance: SAS Data Management ensures that data handling processes meet industry regulations, helping organizations to maintain trust and transparency in their data management practices.

SAS Data Management Pricing Plans

SAS Data Management offers customized pricing plans tailored to the specific needs of organizations. To understand the full range of pricing options and the features included in each plan, interested parties are encouraged to request a demo.

12. Google Cloud Dataflow

Google Cloud Dataflow is a fully managed service that simplifies the complexities of large-scale data processing. It offers a unified programming model for both batch and stream processing, meaning it can handle both stored historical data and real-time data as it’s generated. As part of the Google Cloud ecosystem, Dataflow integrates seamlessly with other services like BigQuery, Pub/Sub, and Cloud Storage, providing a comprehensive solution for ETL tasks, real-time analytics, and computational challenges. Its serverless approach means that users do not have to manage the underlying infrastructure, allowing them to focus on the analysis and insights rather than the operational aspects of their data pipelines.

What does Google Cloud Dataflow do?

Google Cloud Dataflow is designed to provide a scalable and serverless environment for data processing tasks. It enables users to create complex ETL, batch, and stream processing pipelines that can ingest data from various sources, transform it according to business logic, and then load it into analytics engines or databases for further analysis. Dataflow’s ability to handle both batch and real-time data makes it versatile for a wide range of use cases, from real-time fraud detection to daily log analysis. The service abstracts away the provisioning of resources, automatically scaling to meet the demands of the job, and provides a suite of tools for monitoring and optimizing pipelines, ensuring that data is processed efficiently and reliably.

Google Cloud Dataflow Key Features

Unified Stream and Batch Processing: Dataflow offers a single model for processing both streaming and batch data, which simplifies pipeline development and allows for consistent, more manageable code.

Serverless Operation: Users can focus on coding rather than infrastructure, as Dataflow automatically provisions and manages the necessary resources.

Auto-scaling: The service scales resources up or down based on the workload, ensuring efficient processing without over-provisioning.

Integration with Google Cloud Services: Dataflow integrates with BigQuery, Pub/Sub, and other Google Cloud services, enabling seamless data analytics solutions.

Built-in Fault Tolerance: Dataflow ensures consistent and correct results, regardless of the size of the data or the complexity of the computation, by providing built-in fault tolerance.

Developer Tools: It offers tools for building, debugging, and monitoring data pipelines, which helps in maintaining high performance and reliability.
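
The idea behind unified stream and batch processing can be sketched in plain Python. Dataflow pipelines are normally written with the Apache Beam SDK; the example below deliberately avoids that SDK and uses generators instead, so it only illustrates the concept of writing a transform once and applying it to both a bounded and an unbounded source. All names and sample events are hypothetical.

```python
import itertools


def parse_and_filter(events):
    """One transform, written once, that accepts any iterable of events."""
    for event in events:
        if event["amount"] > 0:  # drop refunds and invalid rows
            yield {"user": event["user"], "amount_cents": round(event["amount"] * 100)}


def stored_events():
    """Bounded source: a finite list standing in for historical data."""
    return [
        {"user": "a", "amount": 1.5},
        {"user": "b", "amount": -2.0},
        {"user": "c", "amount": 3.0},
    ]


def live_events():
    """Unbounded source: an endless generator standing in for a message stream."""
    for n in itertools.count(1):
        yield {"user": f"u{n}", "amount": float(n)}


if __name__ == "__main__":
    # Batch: the whole bounded input is processed to completion.
    print(list(parse_and_filter(stored_events())))
    # Stream: the same transform runs lazily; here only the first 3 results are taken.
    print(list(itertools.islice(parse_and_filter(live_events()), 3)))
```

Because the transform never assumes its input ends, the same code serves both cases; a managed service adds the distribution, scaling, and fault tolerance that this sketch leaves out.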

Google Cloud Dataflow Pricing Plans

Google Cloud Dataflow pricing is based on the resources a job consumes, such as CPU, memory, and storage, and is billed per second, which gives users granular control over costs. For a tailored quote, users can contact the sales team. The main pricing components are:

Dataflow Shuffle: This feature is priced based on the volume of data processed during read and write operations, which is essential for operations that involve shuffling large datasets.

Confidential VM Pricing: Dataflow offers confidential VMs at a global price, ensuring that costs are predictable and do not vary by region.

Complementary Resources: While Dataflow jobs may consume resources like Cloud Storage, Pub/Sub, and BigQuery, these are billed separately according to their specific pricing.

Dataflow Prime: For users requiring advanced features and optimizations, Dataflow Prime is available, which includes additional pricing for features like Persistent Disk, GPUs, and snapshots.

Payment for Google Cloud Dataflow services can be made using debit and credit cards, PayPal, and bank wire transfers, offering flexibility in payment methods.

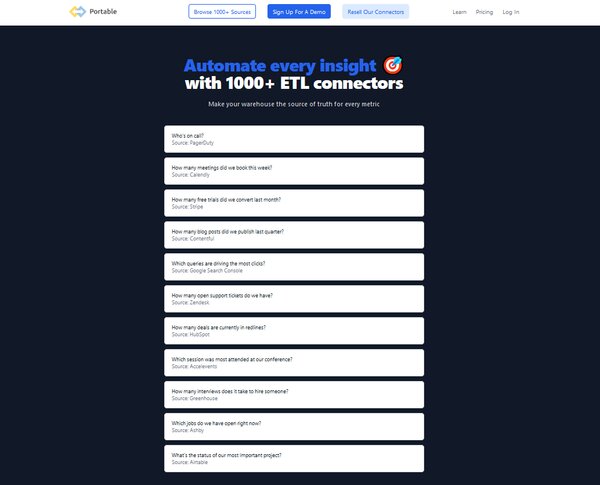

13. Portable

Portable is a cloud-based ETL tool designed to streamline the process of data integration for businesses. It simplifies the complex and often technical aspects of data pipelines, making it accessible for users without extensive coding knowledge. Portable’s platform is engineered to manage the entire ETL process, which includes extracting data from various sources, transforming it to fit operational needs, and loading it into a destination system for analysis and business intelligence. This tool is particularly beneficial for organizations looking to automate their data workflows and leverage cloud infrastructure to handle data extraction, in-flight data transformation, and data loading without the need for maintaining their own infrastructure.

What does Portable do?

Portable provides a no-code solution for creating data pipelines, enabling users to connect to over 500 data sources. It is designed to handle the intricacies of data transfer logic, such as making API calls, processing responses, handling errors, and respecting rate limits. Portable also takes care of in-flight data transformation by defining data types, creating schemas, and ensuring join keys exist, as well as organizing unstructured data for downstream needs. The platform is suitable for businesses of all sizes that require a reliable and scalable solution for integrating their data across various systems and platforms, whether for analytics, reporting, or operational purposes.
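
The data transfer logic described above (API calls, response processing, error handling, rate limits) can be sketched as follows. This is a generic illustration, not Portable's implementation: the RateLimited exception, the fetch_page callback, and the page format with "items" and "next" keys are all hypothetical. The network call is injected as a function so the retry logic can be read and tested on its own.

```python
import time


class RateLimited(Exception):
    """Hypothetical error raised when the API asks the client to slow down."""

    def __init__(self, retry_after):
        super().__init__(f"rate limited, retry after {retry_after}s")
        self.retry_after = retry_after


def fetch_all_pages(fetch_page, max_retries=3, sleep=time.sleep):
    """Collect every record from a paginated API, retrying transient failures.

    fetch_page(cursor) is assumed to return {"items": [...], "next": cursor_or_None}.
    """
    records = []
    cursor = None
    while True:
        for attempt in range(max_retries + 1):
            try:
                page = fetch_page(cursor)
                break
            except RateLimited as err:
                if attempt == max_retries:
                    raise
                sleep(err.retry_after)   # wait as long as the API requested
            except ConnectionError:
                if attempt == max_retries:
                    raise
                sleep(2 ** attempt)      # exponential backoff: 1s, 2s, 4s
        records.extend(page["items"])
        cursor = page.get("next")
        if cursor is None:
            return records
```

A managed connector packages this kind of loop for each source, which is the work a no-code platform takes off the user's hands.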

Portable Key Features

Over 500 Data Connectors: Portable offers an extensive range of ETL connectors, allowing businesses to integrate data from a wide variety of sources seamlessly.

Cloud-Based Solution: As a cloud-based ETL tool, Portable is hosted on the provider’s servers, which means users can access the service from anywhere and do not need to worry about infrastructure maintenance.

No-Code Interface: The platform provides a user-friendly, no-code interface that makes it easy for non-technical users to set up and manage data pipelines.

Custom Connector Development: For unique data sources, Portable allows the development of custom connectors, providing flexibility and control over data integration.

Flat Fee Pricing Model: Portable adopts an attractive flat fee pricing model, making it easier for businesses to predict their expenses without worrying about data volume caps.

Real-Time Data Transformation: Portable can transform data in real time, so the data reaching the destination is up to date and accurate for decision-making.

Portable Pricing Plans

Portable offers three main pricing plans to accommodate different business needs:

Starter Plan: This plan, priced at $200 per month, is designed for those just getting started with data integration, offering 1 scheduled data flow and features like unlimited data volumes, freshness fields, and flow scheduling every 24 hours.

Scale Plan: Aimed at growing businesses, the Scale Plan, priced at $1,000 per month, includes up to 10 scheduled data flows, more frequent flow scheduling every 15 minutes, and upcoming features like multi-user accounts and webhook notifications.

Growth Plan: For enterprises with extensive data integration needs, the Growth Plan provides 10+ scheduled data flows, near real-time flow scheduling, and additional upcoming features such as admin API access.

Portable accepts various payment methods, including debit and credit cards, PayPal, and bank wire transfers, providing flexibility for users in managing their subscriptions.

FAQs on ETL Tools

What is an ETL Tool?

An ETL tool is a software application used to extract, transform, and load data from various sources into a data warehouse or other target system. These tools automate the process of data integration, ensuring data quality and consistency, and reducing the time and effort required to prepare data for analysis.

Why are ETL Tools Important?

ETL tools are crucial in today’s data-driven world as they automate the process of extracting data from various sources, transforming it into a standardized format, and loading it into a data warehouse. This automation not only saves time and resources but also improves data quality and consistency, enabling businesses to make data-driven decisions more efficiently.

How do ETL Tools Work?

ETL tools work by extracting data from various sources, transforming it to meet the necessary quality standards, and then loading it into a data warehouse or other target system. They automate this entire process, reducing errors and speeding up data integration.
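
The three stages can be sketched in a few lines of Python using only the standard library. This is a toy pipeline under stated assumptions, not a representation of any specific tool: the CSV content, the orders table, and the cleaning rules (trim names, drop duplicates and rows with no amount) are invented for illustration.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with untidy names, a duplicate, and a missing amount.
RAW_CSV = """order_id,customer,amount
1, Alice ,19.99
2,bob,5
2,bob,5
3,Carol,
"""


def extract(text):
    """Extract: read raw rows from the source (here, CSV text)."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop incomplete and duplicate rows, normalize types and names."""
    seen = set()
    clean = []
    for row in rows:
        if not row["amount"].strip():
            continue                      # skip rows with no amount
        if row["order_id"] in seen:
            continue                      # skip duplicate order IDs
        seen.add(row["order_id"])
        clean.append(
            (int(row["order_id"]), row["customer"].strip().title(), float(row["amount"]))
        )
    return clean


def load(conn, rows):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline(text, conn):
    """Run extract, transform, and load in order."""
    load(conn, transform(extract(text)))


if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")
    run_pipeline(RAW_CSV, warehouse)
    print(warehouse.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
```

Commercial ETL tools wrap the same sequence in connectors, scheduling, monitoring, and error handling, which is where most of their value lies.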

What are the Key Features of ETL Tools?

Key features of ETL tools include support for multiple data sources, an intuitive user interface for easy data manipulation, and scalability to handle large volumes of data. They should also provide data quality and profiling capabilities, support for both cloud and on-premises data, and be cost-effective.

What are the Challenges in Using ETL Tools?

While ETL tools offer numerous benefits, they also present some challenges. These include the need for technical expertise to set up and manage the tools, handling diverse data sources, and ensuring data security during the ETL process.

What Types of ETL Tools are Available?

There are several types of ETL tools available, including open-source tools, cloud-based services, and enterprise software. The choice of tool depends on the specific needs and resources of the organization.

How to Choose the Right ETL Tool?

Choosing the right ETL tool depends on several factors, including the complexity of your data requirements, the volume of data you need to process, the types of data sources you’re working with, and your budget. It’s also important to consider the tool’s user interface, scalability, and support services.

Can Non-Technical Users Use ETL Tools?

Yes, many ETL tools come with graphical user interfaces that make them accessible to non-technical users. However, a basic understanding of ETL processes and data management principles is beneficial.

What is the Future of ETL Tools?

The future of ETL tools lies in their ability to handle increasingly complex data landscapes, including real-time data streams and diverse data sources. Advances in AI and machine learning are also expected to enhance the capabilities of ETL tools, making them even more efficient and effective.

Are ETL Tools Only Used for Data Warehousing?

While ETL tools are commonly used in data warehousing, they are not limited to this application. They can also be used for data migration, data integration, and data transformation tasks in various other contexts.

Conclusion

ETL tools play a pivotal role in today’s data-driven business environment. They streamline the process of extracting, transforming, and loading data, making it ready for analysis and decision-making. With their ability to handle diverse data sources and large volumes of data, ETL tools are indispensable for any organization that aims to leverage its data effectively. As technology continues to evolve, we can expect ETL tools to become even more powerful and versatile, further enhancing their value to businesses.

In the world of big data, ETL tools are the unsung heroes. They work behind the scenes, ensuring that data is clean, consistent, and ready for analysis. By automating complex data management tasks, they free up time and resources, enabling businesses to focus on what really matters – using their data to drive strategic decisions. As we move forward, the importance of ETL tools is only set to increase, making them a key component of any successful data strategy.